AIStorm, an edge artificial intelligence startup, introduced a family of semi-custom solutions for mobile handsets, IoT, and advanced driver-assistance systems (ADAS) at MWC 2019 in Barcelona.

AIStorm says its charge-domain processing architecture lets it outperform digital GPU-based solutions, delivering edge-ready solutions at 5x to 10x lower cost.

According to a press release, AIStorm CEO David Schie sees a huge opportunity to outperform approaches that need to digitize sensor data, a step that introduces latency and can cause important information to be missed.

“Many industry players are focused on deep submicron GPU/NPU-based solutions to accelerate AI at the edge. We believe that such solutions are not compatible with the real-time processing, power, and low-cost requirements of these applications,” said Schie. “Today, we are reshaping the landscape by enabling complete solutions that include a sensor, an analog front end, and AI processing at low cost, and suitable for even the smallest form factors — without the need to digitize input data.”

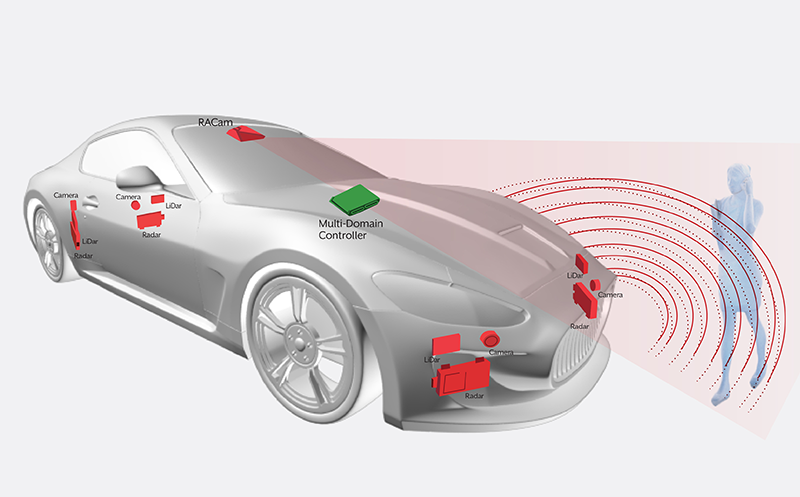

AIStorm initially targets two huge markets, mobile/wearable and ADAS:

- AIStorm IoT Vision/IoT Waveform Solutions. Designed for imaging and HID/biometric applications in mobile phones, cameras, wearables & IoT applications, AIStorm offers AI-in-sensor solutions for tasks such as fingerprint sensing, gesture control, heart monitoring and heart-based identification, occupancy sensing, facial recognition, voice input, earbud, drone imaging, image stabilization, and security and intersection cameras.

- AIStorm ADAS Solutions. Designed for advanced driver-assistance systems, AIStorm introduces CIS, SPAD/SiPM and SiGe sensors coupled to its analog AI engines, which eliminate the need for digitization and allow continuous processing of incoming data. Other solutions include gesture control, eye-blink monitoring, voice, and wavelet-based failure detection.

According to AIStorm, its SoCs are a better option because they can process data directly from sensors while it is still in its native analog form, eliminating the need to convert it into a digital format. The processed analog data can then be used to train artificial intelligence and machine learning models for a wide variety of tasks.